
Indian Universities Are Obsessed With Research Metrics — And Science Might Pay The Price

Karan Kamble

Jan 05, 2026, 06:59 AM | Updated Feb 03, 2026, 07:16 PM IST

There was a physicist who just didn't publish enough papers, and this is being kind to him. When all the researchers at his university would get pulled up to present a list of their recent publications, he would respond with "none."

By his own admission, he had become "an embarrassment to the department when they did research assessment exercises."

"Today I wouldn't get an academic job," he told The Guardian en route to Stockholm to receive the 2013 Nobel Prize in Physics. "It's as simple as that. I don't think I would be regarded as productive enough."

This physicist was none other than Peter Higgs, perhaps twenty-first-century science's most famous figure: the man behind the particle that, to his dislike, was dubbed the "God particle" but came to be better known, perhaps more pleasingly for him, as the Higgs boson.

He said he couldn't see himself replicating his 1964 groundbreaking work "in the present sort of climate," where publication volume was paramount.

The Higgs field was proposed in 1964 as a new kind of field that fills the entire universe and gives mass to elementary particles. The Higgs boson is a wave, or excitation, in that field. The discovery of the particle at the Large Hadron Collider at CERN (the European Organisation for Nuclear Research) confirmed the existence of the Higgs field nearly five decades later.

Higgs' comments about the research climate are from 2013. Since then, the grip of various metrics (the number of papers published, h-index, citation count, impact factor, and so on) has only tightened, increasingly suffocating academics who are forced to walk a tightrope between quantity and quality in scientific research.

Higgs, though, is just one name. Other great scientists who would likely suffer similarly in the current climate of high and specific expectations of research output include Gregor Mendel, Ludwig Boltzmann, Claude Shannon, Kurt Gödel, and even our very own Srinivasa Ramanujan.

Ramanujan worked largely in isolation, far from the collaboration compulsions of the present era, perhaps in part due to the very different communication paradigm of his time. He likely had no journal strategy, nor a pipeline. His publication count was low, with many of his results appearing as letters and conjectures. He would score poorly on h-index, publication count, and journal prestige, all marks of good research today. Yet, he is easily one of the greatest mathematicians to have ever lived.

"Some people don't understand excellence. They need numbers," says Professor Amitabha Bandyopadhyay of the Indian Institute of Technology (IIT), Kanpur. "They are very comfortable when you say five is more than four. Because they don't really understand excellence."

This raises the question: what kind of science does a metrics-driven system select for, and what kind does it quietly eliminate?

When Metrics Become Targets

Bibliometrics is the term used to describe the application of statistical methods to analyse publications. In academia, these analyses are typically used to quantify and track researcher output and impact.

Though bibliometrics originated in the late nineteenth century, they have become increasingly relevant today through their many expressions: h-index, impact factor, citation count, and other rankings.

The h-index, for instance, looks at how many publications an author has and how many citations those publications have received. An h-index of 10 means an author has 10 publications that have each received at least 10 citations. The Journal Impact Factor (JIF) is meant to approximate how often papers in a journal are cited, and is often treated, incorrectly, as a proxy for quality. An impact factor of 5 means that papers the journal published in the preceding two years were cited five times each, on average, in the year being measured.
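To make the two definitions concrete, here is a minimal sketch in Python. The function names and the citation counts are invented for illustration; they are not drawn from any real researcher or journal, and the impact factor shown is the standard two-year version in simplified form.

```python
def h_index(citations):
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break
    return h


def impact_factor(citations_this_year, citable_items_prev_two_years):
    """Citations received this year to items from the previous two years,
    divided by the number of citable items published in those two years."""
    if citable_items_prev_two_years <= 0:
        raise ValueError("need at least one citable item")
    return citations_this_year / citable_items_prev_two_years


# Hypothetical citation counts, invented for illustration only.
prolific = [12, 11, 10, 10, 10, 10, 10, 10, 10, 10, 3, 2, 1, 0]
selective = [900, 450, 120, 60, 8]

print(h_index(prolific))        # 10
print(h_index(selective))       # 5
print(impact_factor(500, 100))  # 5.0
```

In this made-up case, the author with five papers can never exceed an h-index of 5, however heavily those papers are cited, while the author with many modestly cited papers scores 10.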

As is easy to see, these metrics are useful. They help with quickly getting a sense of the value and reach of research through its published expression. But they become problematic when, rather than aiding evaluation, they begin to replace judgement altogether.

Suppose an institute is hiring a faculty member and sets a particular h-index as the criterion and little else, without considering how publication norms vary across fields, whether the subject being researched is mainstream or at the edge of knowledge, and how either inevitably affects publication and citation counts. The odds of hiring the best talent will be dim.

"If you say you want to hire people who have 15 publications or above," a physicist at a top Indian institute, who didn't want to be named, explains, "and then a guy comes in from Stanford or Caltech (California Institute of Technology) with only five papers; by that criterion, he doesn't make it. But in quality, he is maybe far superior. This is the problem."

Metrics don't often reveal the full picture. "When a measure becomes a target, it ceases to be a good measure," runs the popular formulation of Goodhart's Law, named after British economist Charles Goodhart, who made the underlying observation in 1975 in the context of monetary policy. This adage reflects the reality of overreliance on metrics in academia.

Lazy science administrators have come to rely greatly on metrics because they come dressed as 'objectivity', for which scientists carry great fondness. "Everybody has been going gung-ho about what they call an objective way of judging people," Rajeeva Laxman Karandikar, one of India's most respected statisticians, says. "Citations, h-index, journal impact factors, all these have become ultra-important. And that is just not acceptable, in plain and simple words. Experts must read and understand a paper to judge the same, not go by bibliometrics."

Numbers may feel objective, he argues, but the deeper question is whether the system is optimising the right thing at all. "Yes, it's 'objective' because you are going by numbers," he says. "But have you started with the right objective function to maximise?" he asks me after a polite enquiry about my mathematics background.

"Once you set a goal, people try to achieve the goal," says the physicist. "That works in industry. But academia is supposed to be creative. When you force it into targets, numbers start mattering more than ideas."

Prof Bandyopadhyay is more direct: "These metrics are the worst thing!" He argues that "anyone who is good at science will tell you, these metrics are what are killing science, not only in India but throughout the world." The problem is compounded when officials, far removed from the nitty-gritty of scientific work, drive the overreliance on metrics to judge science.

In an October 2025 paper in the Notices of the American Mathematical Society, a global group of senior mathematicians posed blunt questions: Do more citations mean better science? Do more papers mean a better scientist? And, crucially, do metrics change how researchers behave?

This last question speaks to an important implication of the undue emphasis on bibliometrics: when there are specific metrics for a researcher to ace, they can resort to gaming the system in pursuit of successful outcomes.

In the authors' view, the answer to that final question is yes, and generally for the worse. "Specifically, there is very good evidence that some individuals, groups, institutions, and editorial boards are conspiring to tailor their publication behaviour to manipulate rankings made on the basis of bibliometric analysis," they write.

This is a worldwide phenomenon. And though this was not how Indian academia traditionally operated, it has increasingly begun to do so in recent years.

The Indian Turn to Numbers

Today, numbers exert an outsized influence over academic life in India. Over the past decade, numerical indicators of research output have come to occupy a central place in how universities are judged, how faculty are evaluated, and how institutional prestige is constructed.

The most visible expression of this shift is the National Institutional Ranking Framework (NIRF), India's flagship university ranking system, introduced in 2015. Today, NIRF rankings shape public perception, institutional ambition, and, in many cases, internal decision-making.

Research accounts for about a quarter of an institution's overall score, with publication counts and citation numbers forming a significant component. In practice, this has meant that research output, quantifiable, comparable, and easily auditable, has become a primary currency of academic success.

Part of the problem, Dr M Vidyasagar argues, is that Indian administrators lack the courage to challenge Western ranking systems. "Can we cock a snook at, say, the Times ratings and say we will do our own rating? No, we can't," says the Distinguished Professor at IIT Hyderabad and former SERB National Science Chair. He contrasts this with China, which created its own ranking methodology and applied it globally, showing Chinese universities in a different light. "The Chinese said, well, we think the Times ratings are irrelevant. So we have made up our own ratings. It's totally open source. They published the ranking of all universities in the world, including but not limited to Chinese universities. And they showed that Chinese universities actually do quite well."

Indian officials, several researchers suggest, are reluctant to take such steps, largely because they "are really scared to upset the Americans." "That would jeopardise the babus' prospects of settling their kids abroad," one scientist suggests.

The effects of the emphasis on established metrics are visible in the numbers. India's research output has grown at a staggering pace. By some estimates, India now ranks among the top four countries globally in terms of total research publications. The volume of papers produced by Indian institutions has increased year after year, with universities, both public and private, actively encouraging faculty to publish more, and more often.

Yet this surge in quantity has not been accompanied by a commensurate rise in impact. While India's share of global publications has grown rapidly, its share of global citations has lagged behind. In global comparisons, India often ranks several places lower in citation impact than in raw output. In other words, Indian researchers are publishing more papers than ever before, but those papers, on average, are cited less frequently than those produced in many other major research economies.

Citations, imperfect as they are, tend to accumulate around work that reshapes a field, opens new questions, or provides tools that others find indispensable. When citations fail to keep pace with publication counts, it suggests a system optimising for visibility rather than significance.

The pressure to publish is felt most acutely at the level of individual careers. Across universities, publication requirements have become embedded in hiring criteria, promotion rules, and performance assessments. Faculty members speak of numerical thresholds (minimum numbers of papers, minimum impact factors, minimum h-indices) that must be met before other aspects of a researcher's work are even considered.

The result is a culture of performative compliance. "I hear from many organisations that just before an inspection they do many things," says Dr Vidyasagar. "There is a huge group working on how to score marks and points and things like that. I don't think this improves the quality of teaching or education."

Well-resourced institutions, he adds, often respond not by recruiting better scholars, but by learning how to game the system. "The rich institutes can actually afford to do many things. But they are not actually recruiting talented teachers or professors. Instead they are making the institute glamorous."

The absurdity has reached new heights in some cases. Prof Karandikar points to instances where institutions with questionable research credentials have appeared in the top ranks of major research listings. "It has reached a ridiculous proportion," he says.

This has predictable consequences. Research that is risky, slow, or conceptually difficult becomes harder to justify. Incremental work, easily publishable and quickly counted, becomes the safer option. Collaboration decisions, journal choices, and even research questions risk being shaped by how efficiently they convert effort into points.

Much of this behaviour is also rational. If the benchmarks for individual researchers and institutes are numerical, their response will be numerical too. Metrics become incentives, and incentives, in turn, shape behaviour.

It is within this context that the darker consequences of metric-driven evaluation begin to emerge.

When Incentives Turn Toxic

Over the past decade, researchers and scholarly bodies worldwide have documented a sharp rise in practices aimed more at improving measurable performance than advancing knowledge. One can find excessive self-citation, citation clubs, salami-slicing of results into multiple papers, and the proliferation of journals whose primary function is to publish quickly rather than rigorously.

"It puts pressure on scientists, and hence, they don't worry about quality and concentrate on just quantitative things," says Prof V Ravindran, who was, until October 2025, the director of the Institute of Mathematical Sciences (IMSc) and now serves as Senior Professor. As a reviewer, Prof Bandyopadhyay notes that in paid journals, "quality is the last thing on their mind. The more papers published in their journals, the more money the journals will get."

"This is not happening much in the older IITs," the physicist who didn't want to be named adds. "But in newer institutions, where metric-based lists are used for promotions and awards, quality inevitably suffers."

Prof Karandikar is blunt about the scale of the problem: "India ranks very high on the misuse factor."

The mathematical sciences are a particularly interesting case. Mathematics papers tend to have long gestation periods, low citation counts, and influence that unfolds over decades rather than years. When such a discipline is forced into frameworks designed for faster-moving fields, distortions appear quickly.

"The impact on mathematics worldwide has been disastrous," says Prof Karandikar, who has served on committees of the International Mathematical Union, the body that awards the Fields Medal. "It became so apparent how badly citations were being misused that the main company doing citation rankings eventually exited mathematics altogether."

Indeed, Clarivate significantly curtailed the use of citation-based rankings in mathematics after widespread distortions and manipulation became apparent.

Prof Karandikar witnessed the breaking point: "The main entity which does the citation business declared they are exiting maths because in maths it is being misused. So they won't do the citation business for maths anymore. The deeds were getting exposed."

Similarly in India, as publication and citation counts have become embedded in institutional assessments, demand has grown for venues that promise speed, certainty, and numerical returns. This demand has been met by an expanding market of low-quality and predatory journals, many of which aggressively solicit submissions, offer minimal or nonexistent peer review, and guarantee publication for a fee. What's worrying, however, is that the problem extends to even ostensibly legitimate journals.

We are then left with a system that prefers to select for those most adept at navigating metrics, rather than those best equipped to pursue difficult or unconventional questions.

The Administrative Shortcut

Why has the system moved so decisively in this direction?

Part of the answer lies not in malice but in convenience. Evaluating research is hard, time-consuming, and requires domain expertise. Metrics offer a shortcut. "All these metrics are a tool used by lazy administrators," says Dr Vidyasagar, a phrase he applies not only to bureaucrats, but to academics as well.

He gives a concrete example: "Suppose somebody applies for a faculty job. Nowadays you get 200-300 applications. Even if you weed out all the obviously irrelevant and unqualified people, you will still be left with maybe 40, 50, or 60 applications that you need to read. So what is the easiest way of avoiding reading them? You just say we're going to seek only those people who have an h-index of 20 or who have these many citations or these many publications. Anything to avoid investing time in evaluation."

Once embedded, such shortcuts become self-reinforcing. Researchers and institutions adapt and get on board. Entire ecosystems grow around improving scores.

Prof Ravindran points to what he calls "a big mockery of the entire education system": newer institutions boasting about faculty listed in international citation databases. "You don't see any TIFR (Tata Institute of Fundamental Research) or IISc (Indian Institute of Science) Bengaluru scientist in these lists. It's generally people from substandard institutes there."

This problem is not uniquely Indian. But India's scale, and its rapid expansion of higher education, amplifies the effects. When promotion, funding, and prestige hinge on numerical output, it is unsurprising that paper mills, predatory journals, and citation games proliferate.

The Indian Case: Retractions, Rankings, and Market Pressures

One of the clearest signals of stress in the Indian research ecosystem is the rapid increase in retracted papers. Between 1996 and 2024, India accounted for 5,412 retractions of research articles, an average of about 1.8 retractions per 1,000 publications, according to an analysis of the Retraction Watch Database by India Research Watch.

Nearly half of these were classified as serious misconduct, including plagiarism and data manipulation, with others tied to fake peer review and flawed research practices.

India now ranks second only to China in absolute numbers of retractions globally. In China, more than three retractions occur per 1,000 publications; in India, the figure is about two per 1,000, significantly higher than in countries like the United States, where it is less than one per 1,000.

Retractions have been especially high in the past few years: 1,212 in 2022, followed by 866 in 2023 and 860 in 2024. Together, these three years account for more than half of all Indian retractions recorded since 1996. These numbers suggest a system under growing strain, where the pressure to produce quantifiable outputs has begun to overwhelm considerations of rigour and integrity.
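The retraction figures quoted above can be cross-checked with simple arithmetic. The sketch below uses only the numbers cited in this article; the implied publication total is a back-of-the-envelope derivation from the quoted rate, not a figure reported by India Research Watch.

```python
# Figures as quoted in this article (India Research Watch analysis of the
# Retraction Watch Database).
total_retractions = 5412   # India, 1996 to 2024
rate_per_1000 = 1.8        # retractions per 1,000 publications
recent = {2022: 1212, 2023: 866, 2024: 860}

# Share of all retractions that fall in the three most recent years.
recent_total = sum(recent.values())
recent_share = recent_total / total_retractions

# Assumption: the quoted rate covers the same 1996-2024 period, so the
# publication count it implies is total / rate * 1000.
implied_publications = total_retractions / rate_per_1000 * 1000

print(recent_total)                          # 2938
print(round(recent_share * 100, 1))          # 54.3
print(round(implied_publications / 1e6, 2))  # 3.01
```

On these numbers, 2022 to 2024 alone account for roughly 54 per cent of the retractions recorded over nearly three decades, and the quoted rate implies about three million publications over the full period.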

In response, the NIRF is introducing negative marking for institutions with large numbers of retracted or low-quality research publications. Penalising flawed science marks a significant shift from crediting institutions exclusively on the basis of positive, metric-based research output. It reflects a growing recognition that quantity without quality can distort ranking outcomes.

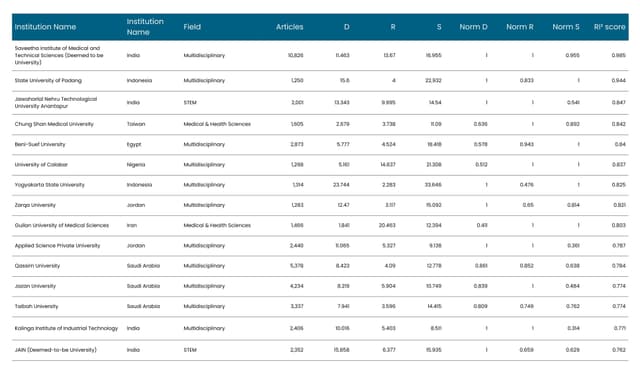

The retraction issue is compounded by the broader mismatch between India's research volume and its influence. Between 2020 and 2025, India contributed over 16 lakh (1.6 million) papers to Scopus-indexed journals, more than most European countries combined, yet it ranks much lower in impact metrics such as the national h-index, a measure combining output and citation performance.

The pressure to produce measurable research outputs also feeds a marketplace of low-quality publication venues. Questionable journals have been identified and delisted from major indexing services as part of efforts to preserve integrity. Scopus, for example, conducts regular quality reviews of indexed journals, and many titles are discontinued each year when they no longer meet editorial and indexing standards. Over recent years, hundreds of journals have been removed from Scopus coverage as part of this ongoing quality control process.

Predatory publishing, in which journals guarantee publication for a fee without meaningful peer review, has also caught the attention of Indian researchers and policymakers. These outlets aggressively solicit submissions and often fabricate impact factors, providing an easy route to boost publication counts at the expense of rigour.

The University Grants Commission (UGC) responded in 2018 by removing 4,305 dubious journals from its approved list after complaints about their credibility. This was an attempt to reduce incentives for publishing in outlets with questionable standards. Thousands of crores are said to be spent annually by Indian scientists on journal publication fees, money that Prof Bandyopadhyay argues could instead build "outstanding Indian journals" with genuine quality control.

So we are in a situation where, on the one hand, Indian research output, and the global footprint of Indian scholars, has grown impressively, while on the other, there is increasing evidence that publication practices in parts of the system are shaped more by rankings, point accumulation, and institutional incentives than by the pursuit of enduring knowledge.

The good news, though, is that the moves by NIRF and UGC to introduce penalties for low-quality work suggest that policymakers are beginning to recognise that more is not always better.

"A true scientist doesn't feel satisfied by publishing 10 papers in a year," Prof Ravindran observes. "I can quote many scientists who have very few papers, but they are all very quality papers."

The physicist points to a recent example: a 2025 Nobel laureate in medical sciences "has hardly a few papers, very normal achievements," referring to Mary E Brunkow, one of three winners of the 2025 Nobel prize in physiology or medicine. She is said to have published just 34 papers. "So, ultimately, it has to be the quality of innovation more than any number." British biochemist Frederick Sanger is perhaps a more extreme example with two chemistry Nobel prizes in his kitty even with a modest publication count of about 70 over a four-decade-long career.

"When a scientist writes a paper," Prof Ravindran says, "it is not about publishing in high-impact journals. It is about doing good work which gives him recognition at the international level and satisfaction that he has contributed to that area in a big way."

The danger, then, is not that metrics occasionally misjudge excellence. It is that, over time, they begin to select against it. Prof Ravindran puts it starkly: "Research should be left alone, not punished."

Excellence Takes Time

Building genuine research and institutional prestige takes time. "Perception takes 30, 40, 50 years to build," Dr Vidyasagar notes. "So you're not going to build perception in five years."

He points to an instructive historical example. In the 1930s, mathematician Norbert Wiener faced two job offers, one from the Massachusetts Institute of Technology (MIT), the other from Lehigh University in Pennsylvania. In his autobiography, Wiener wrote that he was torn between them because both were virtually at the same level.

"Can you imagine?" Dr Vidyasagar asks. "Back in the 1930s, MIT and Lehigh were at the same level. How did MIT become MIT?"

His answer: a sustained, decades-long commitment. MIT threw itself into wartime research during the Second World War, doing profound work in signal processing and related fields. By the 1960s it was a leading institute. "It's like putting your foot on the gas pedal for 30 years and not releasing it. But somehow our people lack the patience because they want to show some results very quickly."

Patience is key, something rankings are structurally incapable of rewarding. Building strong research cultures takes decades. It requires tolerating uneven output, backing long-term bets, and accepting that not all valuable work announces itself quickly or loudly.

Is There a Way Out?

Metrics did not emerge from nowhere, nor are they entirely dispensable. "If you don't have any metric-based ranking," says Prof Manindra Agrawal, director of IIT Kanpur, "there is literally no way for policymakers to decide how to allocate resources."

He elaborates on the dilemma: "The government has to decide. I have Rs X to give. How much should I give to which institution? Should I equally distribute it, or should I distribute it as per some formula? And obviously they would like to give more funds to those who are doing well, to reward them for doing well. But the moment you start measuring it, all these issues arise."

Governments must make comparative decisions, and comparison requires simplification. "There's no perfect solution," he adds. "Any time you want to do such comparative evaluations, you have to distil everything down to a single number because that's what you can compare. And when you distil everything down to a number, of course there will be problems in the process."
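The trouble with distilling everything down to one number can be shown with a small sketch. The institutions, indicator scores, and weightings below are all hypothetical, invented for illustration; neither weighting is an actual ranking formula used by NIRF or anyone else.

```python
def composite_score(indicators, weights):
    """Weighted sum of indicator scores (each on a 0-100 scale)."""
    return sum(weights[name] * indicators[name] for name in weights)


def rank(institutions, weights):
    """Institution names ordered from highest to lowest composite score."""
    return sorted(
        institutions,
        key=lambda name: composite_score(institutions[name], weights),
        reverse=True,
    )


# Hypothetical institutions and indicator scores, invented for illustration.
institutions = {
    "Institute A": {"paper_count": 90, "citations_per_paper": 40, "peer_review": 50},
    "Institute B": {"paper_count": 40, "citations_per_paper": 85, "peer_review": 90},
}

# Two hypothetical weightings; each set of weights sums to 1.
volume_heavy = {"paper_count": 0.7, "citations_per_paper": 0.2, "peer_review": 0.1}
quality_heavy = {"paper_count": 0.2, "citations_per_paper": 0.4, "peer_review": 0.4}

print(rank(institutions, volume_heavy))   # ['Institute A', 'Institute B']
print(rank(institutions, quality_heavy))  # ['Institute B', 'Institute A']
```

The same two institutions swap places depending on the weights chosen, which is why the choice of formula, and not only the underlying data, decides who is seen as "doing well."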

Prof Agrawal probably represents the pragmatist's position, acknowledging that metrics serve a genuine administrative function, even as they create distortions. But he also believes enforcement matters: "What we need is, make this process very open, very transparent; say this is the process we are following, and also make it very clear that if somebody is caught manipulating it, a strong penalty will be given. Catch them and make some institutions an example, so that it deters others from trying to manipulate."

Prof Bandyopadhyay, by contrast, has other ideas. He would create a carefully curated list of high-quality journals in each field, perhaps 20 journals that genuinely maintain rigorous standards. "You publish in those journals once or twice, every year or every two years, and I am happy with that," he says. "Because I know that the quality is there."

He would also block the use of government funds for publication fees in questionable journals: "You can pay and publish, but you can't use government money for that payment. Then all this will stop automatically."

And he would redirect a fraction of the crores spent annually on publication fees towards building strong Indian journals. "And then, as an administrator, I would have confidence that if a paper gets published here, it must have quality. Then you get away from all these metrics."

Across interviews, there is broad agreement on one point: metrics can be guides, but they cannot be goals. "It can be a guideline," says Prof Ravindran. "It cannot be imposed."

Used cautiously, with disciplinary sensitivity and human evaluation layered on top, they may help. Used mechanically, as universal yardsticks, they distort incentives.

The path forward may lie in Prof Bandyopadhyay's vision: curated journal lists, reinvestment in Indian publishing infrastructure, and restrictions on how public funds support dubious outlets. It may lie in Dr Vidyasagar's patience: the willingness to commit to 30-year institutional journeys without demanding immediate returns. It may lie in Prof Agrawal's transparency: making evaluation criteria explicit and enforcing penalties for manipulation.

Or perhaps it lies in Prof Ravindran's simpler observation, one that cuts through all the rankings and indexes and impact factors: "A good scientist will always survive without all these metrics. He will disappear if he doesn't do good work. He can survive with all these metrics, but he will disappear if, although metrics exist, he doesn't do good work." This philosophy bears out strikingly in the cases noted above of Nobel laureates with low publication counts.

Good science, in other words, has a way of making itself known, with or without the right numbers attached. The question is whether we're willing to create the conditions that allow it to emerge, even when it doesn't announce itself through the channels we've learned to monitor.

The work that changes everything doesn't often appear first in a spreadsheet. Yet it endures long after the rankings are forgotten.

Karan Kamble writes on science and technology. He occasionally wears the hat of a video anchor for Swarajya's online video programmes.